FAIR Data Fund use case

The NERVE project: a neuromorphic radar and vision ensemble dataset for distance sensing and people detection

Authors: Amirreza Yousefzadeh (FAIR Data Fund grantee, University of Twente) and a team consisting of Omar Mansour, Pietro Martinello, Ethan Milon, Guangzhi Tang, Manolis Sifalakis and YingFu Xu

Editor: Iulia Popescu

Detecting a person’s position accurately is crucial for the safety of automated systems in human-machine interactions. For instance, in industrial settings, co-robots working alongside human technicians must precisely identify and adapt to human movements to ensure seamless collaboration. Similarly, self-driving cars must accurately locate pedestrians to navigate safely through urban environments.

Conventional 3D sensing solutions rely on high-resolution distance sensors, which are usually expensive to buy and computationally costly to process. There is therefore a need for low-cost, fast solutions for distance sensing and people detection.

A new approach

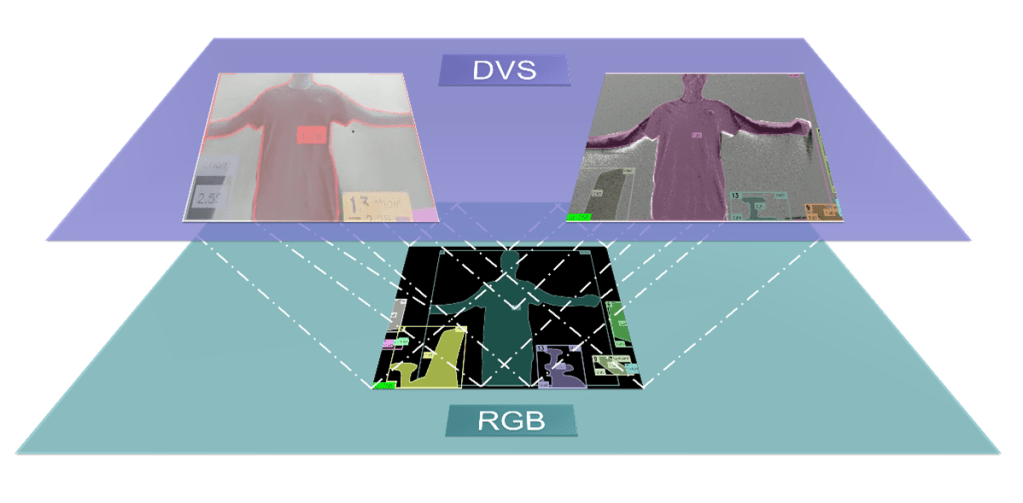

Sensor fusion improves performance by integrating multimodal sensory data, surpassing what any individual sensor could achieve alone. Fusing information from several inexpensive sensors therefore offers a route to fast distance sensing.
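To illustrate why a fused estimate can beat either sensor on its own, here is a minimal sketch of classic inverse-variance weighting of two independent, unbiased distance estimates. This is not the NERVE approach, which fuses the sensors with deep learning; the function name `fuse_estimates` and the example noise levels are hypothetical and chosen only for illustration.

```python
def fuse_estimates(d_radar: float, var_radar: float,
                   d_camera: float, var_camera: float) -> tuple[float, float]:
    """Inverse-variance weighted fusion of two independent, unbiased estimates.

    Hypothetical helper for illustration; returns (fused_distance, fused_variance).
    """
    if var_radar <= 0 or var_camera <= 0:
        raise ValueError("variances must be positive")
    # Each estimate is weighted by its precision (1 / variance).
    w_radar = 1.0 / var_radar
    w_camera = 1.0 / var_camera
    fused = (w_radar * d_radar + w_camera * d_camera) / (w_radar + w_camera)
    # The fused variance is never larger than the smaller input variance.
    fused_var = 1.0 / (w_radar + w_camera)
    return fused, fused_var


# Hypothetical readings: radar says 4.0 m (variance 0.04 m^2),
# camera-based estimate says 4.3 m (variance 0.09 m^2).
distance, variance = fuse_estimates(4.0, 0.04, 4.3, 0.09)
print(f"{distance:.3f} m, variance {variance:.4f} m^2")
```

The fused variance, 1 / (1/0.04 + 1/0.09), is smaller than either input variance, which is the simple statistical intuition behind "better than any single sensor". Learned fusion models can also exploit correlations and modality-specific features that this linear rule ignores.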

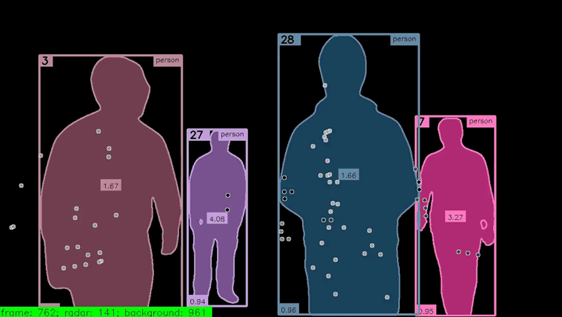

To support this approach, the NERVE neuromorphic sensor fusion dataset combines low-resolution FMCW (frequency-modulated continuous-wave) radars with low-latency neuromorphic cameras. The dataset is tailored to multimodal people detection and distance sensing, with ground-truth labels generated from a high-accuracy RGB camera and a LiDAR sensor to provide reliable reference data for evaluation. The project explored whether accurate distance sensing is feasible by fusing event-based neuromorphic cameras with FMCW radars using deep learning.

Dataset Verification

Dataset verification took advantage of the wide diversity of sensors, which enabled spatial mapping between sensors of different resolutions as well as precise temporal alignment of all sensors.
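Temporal alignment of sensors running at different rates is commonly done by nearest-timestamp matching. The sketch below is illustrative only and is not the NERVE verification pipeline: it assumes every sensor stamps its samples against a shared clock in microseconds, and the names `align_nearest` and `max_gap_us` are hypothetical.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence


def align_nearest(reference_ts: Sequence[int], sensor_ts: Sequence[int],
                  max_gap_us: int) -> List[Optional[int]]:
    """For each reference timestamp, return the index of the closest sensor
    timestamp, or None if the closest one is more than max_gap_us away.

    Assumes (hypothetically) that both sequences are sorted and expressed in
    microseconds on a shared clock.
    """
    matches: List[Optional[int]] = []
    for t in reference_ts:
        # First sensor sample at or after t.
        i = bisect_left(sensor_ts, t)
        # Candidates: that sample and the one just before it, if they exist.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(sensor_ts)]
        if not candidates:
            matches.append(None)
            continue
        # min() keeps the earlier sample when both are equally close.
        best = min(candidates, key=lambda j: abs(sensor_ts[j] - t))
        matches.append(best if abs(sensor_ts[best] - t) <= max_gap_us else None)
    return matches


# Hypothetical 10 Hz reference (e.g. LiDAR) and irregular radar timestamps.
lidar_ts = [0, 100_000, 200_000]
radar_ts = [1_000, 51_000, 98_000, 149_000, 203_000, 260_000]
print(align_nearest(lidar_ts, radar_ts, max_gap_us=10_000))
```

Rejecting matches beyond `max_gap_us` avoids silently pairing a reference frame with a sample from a different moment when a sensor drops frames; the threshold would in practice depend on the slowest sensor's frame period.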

FAIR Data Fund comes in handy

The FAIR Data Fund helped the team organize data in a much more structured and strategic way, make better use of metadata, and think more broadly about issues such as adaptability and usability. The team concludes: "In our opinion, FAIR principles are not only about creating transparent datasets; they are about looking left and right at how everyone, regardless of their affiliation, not only likes to work but should work in order to guarantee the best possible results. Thank you to the 4TU.ResearchData team for their professionalism and amazing support with our project!"

The dataset is available in the 4TU.ResearchData repository: https://data.4tu.nl/private_datasets/yiqrxzXEiRQ7Bjtbf9McXbwBJFYq5w9DLxID2cdI1h8